The Ideal Software Development Experience

What might building software look like in the Star Trek universe?

Science fiction often shapes how we imagine the future. While it rarely predicts outcomes precisely, the visions sci-fi offers can still be revealing. Imagining how software development might work in Star Trek, for example, is a useful thought experiment.

Star Trek represents a technological ideal, at least relative to where we are today. It invites a simple question: if you had access to Star Trek-level technology, how could software development work?

Importantly, in the Star Trek universe, many non-technological constraints remain intact. People are still valuable, but also flawed in familiar ways. What changes most dramatically is the quality of the tools they use.

Foundational Challenges

In this Star Trek world, we will assume that we have a powerful AI that can use available technologies to build whatever software we envision very quickly. This, however, is not the end of the story.

Even the most powerful AI does not alleviate all of our problems. If you want to build something, you still face a number of challenges:

Iteration: Your vision for your application will not be detailed or accurate enough when you start building. You need to be able to explore ideas quickly, with high-quality feedback, as you build and experiment your way toward a high-quality product.

Complexity: Software products become complex very quickly. How do you manage that complexity so you can ensure a high-quality user experience no matter what the end user does within the application?

Collaboration: Even if you are building alone, you are working with an AI. The communication challenges in that collaboration are significant, and they grow with every additional person or AI agent you work with.

These dynamics matter regardless of how capable AI becomes. Even if AI eventually builds large, complex systems with minimal guidance, tools that support iteration, complexity management, and collaboration do not become less important. In fact, they become more important: the faster you can build complex software, the more small decisions you make along the way, and each of those decisions shapes the end-user experience.

Significant projects are shaped by thousands of small decisions. Even if an AI can make reasonable choices at each step, those decisions are subjective, and each one subtly steers the product. Human involvement allows those choices to be guided intentionally.

Over time, the cumulative effect of many small decisions can be the difference between an average product and a great one. When software is built for people, human judgment grounded in lived experience remains essential.

For the purposes of this piece, we will assume there is real value in people collaborating with AI throughout the lifecycle of a product. Even if an AI produces a strong first version, humans still add value by refining, guiding, and evolving what gets built.

Iteration

Anyone who has worked on a significant creative project recognizes the value of iteration. You want to be able to run experiments quickly, observe how changes feel or perform, and improve based on what you learn.

Software has always been unusually well suited to this kind of iteration. Changes can be made at almost any point, even after a product has shipped.

Fast iteration depends on launching experiments quickly and getting high-quality feedback that, in turn, shapes the next experiment.

Complexity

Software becomes complex very quickly. Before long, it is impossible to predict how every part of a system will respond to a given change. One effective way to manage this complexity is through decomposition and perspective. You need to be able to zoom in on individual components and zoom back out to understand how those pieces fit together.

Much like Tony Stark working with JARVIS, you might isolate a component, reason about it on its own, simulate how it behaves under different conditions, make changes, and then observe how those changes integrate back into the larger system.

Equally important is understanding how parts of the system interact. Changes to lower-level components often ripple outward, affecting many areas of an application. Managing complexity requires visibility into what is impacted and how.

Collaboration

One of the hardest challenges in any creative project involving multiple people is communication. You may have a clear vision, but communicating that vision with perfect fidelity is effectively impossible. Language is inherently ambiguous, and that ambiguity increases as you attempt to describe a more complex vision.

This problem is amplified in software, where specific experiences may only occur under certain state conditions. To change such an experience, you must first describe how to put the application into the right state. Only then can you describe the necessary changes, carry them out, and see the results.

While AI has broad knowledge, it typically lacks the deep, accumulated context about a specific codebase that a coworker might have. Even with coworkers, or in a Star Trek world where AI has excellent memory, making sure everyone involved in a project shares the same understanding takes a great deal of effort.

The Ideal Development Experience

Given these goals, what would the ideal experience look like?

We are imagining a Star Trek-like world, so much of what follows is aspirational. Still, it is a useful exercise.

Imagine a very powerful AI that can quickly bring any concept to life. Because it is fast, you can begin with vague, imperfect descriptions. That is fine. If the result is wrong, it can be discarded or refined at a very low cost.

In order to iterate on this initial attempt, you first need to understand what was built. Whatever the interface (a screen, a hologram, or something else), you need to be able to interact with the application and put it through its paces. Enter simulation.

The ideal tool would use AI to create simulations of every part of the application. It would generate representative data, place the system into different states, and either capture the resulting behavior or run the software live with that data in place. It would essentially demo the application to you, walking through what was built, and showing how it all works under different data scenarios.

You would also be able to isolate individual components and simulate them directly. This makes it easier to iterate on specific parts of the application in isolation and to reason about how they behave under different conditions.
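To make the idea of simulating an isolated component more concrete, here is a minimal Python sketch. It is not how CodeYam works; the component `render_cart_summary`, the `SCENARIOS` table, and the `simulate` helper are all hypothetical. The sketch simply runs one isolated function against several named data scenarios so each resulting state can be inspected side by side.

```python
# Hypothetical component under study: renders a short cart summary.
def render_cart_summary(items: list[dict]) -> str:
    if not items:
        return "Your cart is empty"
    count = sum(item["qty"] for item in items)
    total = sum(item["qty"] * item["price"] for item in items)
    noun = "item" if count == 1 else "items"
    return f"{count} {noun}, ${total:.2f}"


# Hypothetical named data scenarios that place the component into different states.
SCENARIOS: dict[str, list[dict]] = {
    "empty": [],
    "single": [{"qty": 1, "price": 9.5}],
    "bulk": [{"qty": 3, "price": 2.0}, {"qty": 2, "price": 10.0}],
}


def simulate(component, scenarios: dict) -> dict:
    """Run an isolated component once per scenario and collect its output."""
    return {name: component(data) for name, data in scenarios.items()}


if __name__ == "__main__":
    for name, output in simulate(render_cart_summary, SCENARIOS).items():
        print(f"{name:>8}: {output}")
```

Keeping scenarios as plain named data makes it cheap to add a new state (say, a cart with a zero-price item) and see how the component responds, without navigating the full application to reach that state.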

You can zoom in on components, interact with them in specific states, and zoom back out to see how changes affect the system as a whole. When you notice an issue, the shared context of an isolated component allows you to ask the AI for targeted changes without first explaining the entire path to the desired outcome.

As changes are made, the AI can quickly demonstrate the results through these simulations, so you can be confident that an intended change does not have unintended consequences elsewhere in the application or under other data scenarios.

This creates a tight feedback loop. You can explore, adjust inputs, experiment, evaluate results, and repeat. If the tool and the AI are fast enough, this feedback loop allows you to shape both the product and its user interactions with confidence.

The ability to fluidly zoom in and out and to run rapid experiments at multiple levels of abstraction across a range of data scenarios is at the core of the ideal experience.

Other Important Considerations

Documentation

High-quality collaboration depends on clear communication, including communication with your past self. Why decisions were made and how the system is meant to work are often not obvious from reading code or using the product.

With sufficiently powerful AI, documentation can become a continuous process rather than a separate task. As decisions are made, the AI can record them automatically and make them easy to retrieve when you return to a specific part of the system. This creates a clear narrative explaining how and why the software works the way it does, preserving shared context as complexity grows.

Maintenance & Testing

Building software is fun. Maintenance is not. Yet maintenance is essential to any high-quality system. As software evolves, it becomes critical to avoid introducing unexpected changes caused by hidden interdependencies.

Automated testing remains one of the most effective ways to manage this risk. Even in a future where AI is highly capable, there is little value in having it manually check for regressions when automated tests can exercise thousands or even millions of scenarios in seconds.

AI can, however, be very effective at generating and expanding test coverage. As software is developed and explored, correct behaviors can be captured. If the same scenarios later produce different results, you can be alerted and decide whether the change is intentional or unexpected.
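Capturing correct behavior and flagging later differences is essentially snapshot (or "golden") testing. The Python sketch below illustrates that loop under simple assumptions: outputs are JSON-serializable, and the baseline is stored in a JSON file. The functions `format_price_v1` and `format_price_v2`, the `SCENARIOS` table, and the helpers `capture`, `save_baseline`, `load_baseline`, and `diff_against_baseline` are hypothetical names chosen for illustration, not a CodeYam API.

```python
import json
import tempfile
from pathlib import Path


# Hypothetical component, version 1: formats a price given in cents.
def format_price_v1(cents: int) -> str:
    return f"${cents / 100:.2f}"


# Hypothetical version 2: a refactor that drops the cents for round amounts.
def format_price_v2(cents: int) -> str:
    if cents % 100 == 0:
        return f"${cents // 100}"
    return f"${cents / 100:.2f}"


SCENARIOS = {"zero": 0, "round": 500, "fractional": 1999}


def capture(component, scenarios: dict) -> dict:
    """Record the component's output for every scenario."""
    return {name: component(data) for name, data in scenarios.items()}


def save_baseline(path: Path, snapshot: dict) -> None:
    path.write_text(json.dumps(snapshot, indent=2, sort_keys=True))


def load_baseline(path: Path) -> dict:
    return json.loads(path.read_text())


def diff_against_baseline(baseline: dict, current: dict) -> dict:
    """Return scenarios whose output changed, appeared, or disappeared."""
    changes = {}
    for name in sorted(baseline.keys() | current.keys()):
        before, after = baseline.get(name), current.get(name)
        if before != after:
            changes[name] = {"before": before, "after": after}
    return changes


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "format_price.snapshot.json"
        save_baseline(path, capture(format_price_v1, SCENARIOS))  # approve v1
        changes = diff_against_baseline(
            load_baseline(path), capture(format_price_v2, SCENARIOS)
        )
    for name, change in changes.items():
        print(f"{name}: {change['before']!r} -> {change['after']!r}")
```

The diff only reports that something changed. Deciding whether `$5` instead of `$5.00` is an intended simplification or a regression is still left to a person, which matches the review step described above.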

This allows complex systems to evolve without sacrificing speed or confidence.

CodeYam: Introducing Memory, Editor, & Labs

This vision is what we are working toward at CodeYam.

CodeYam Memory

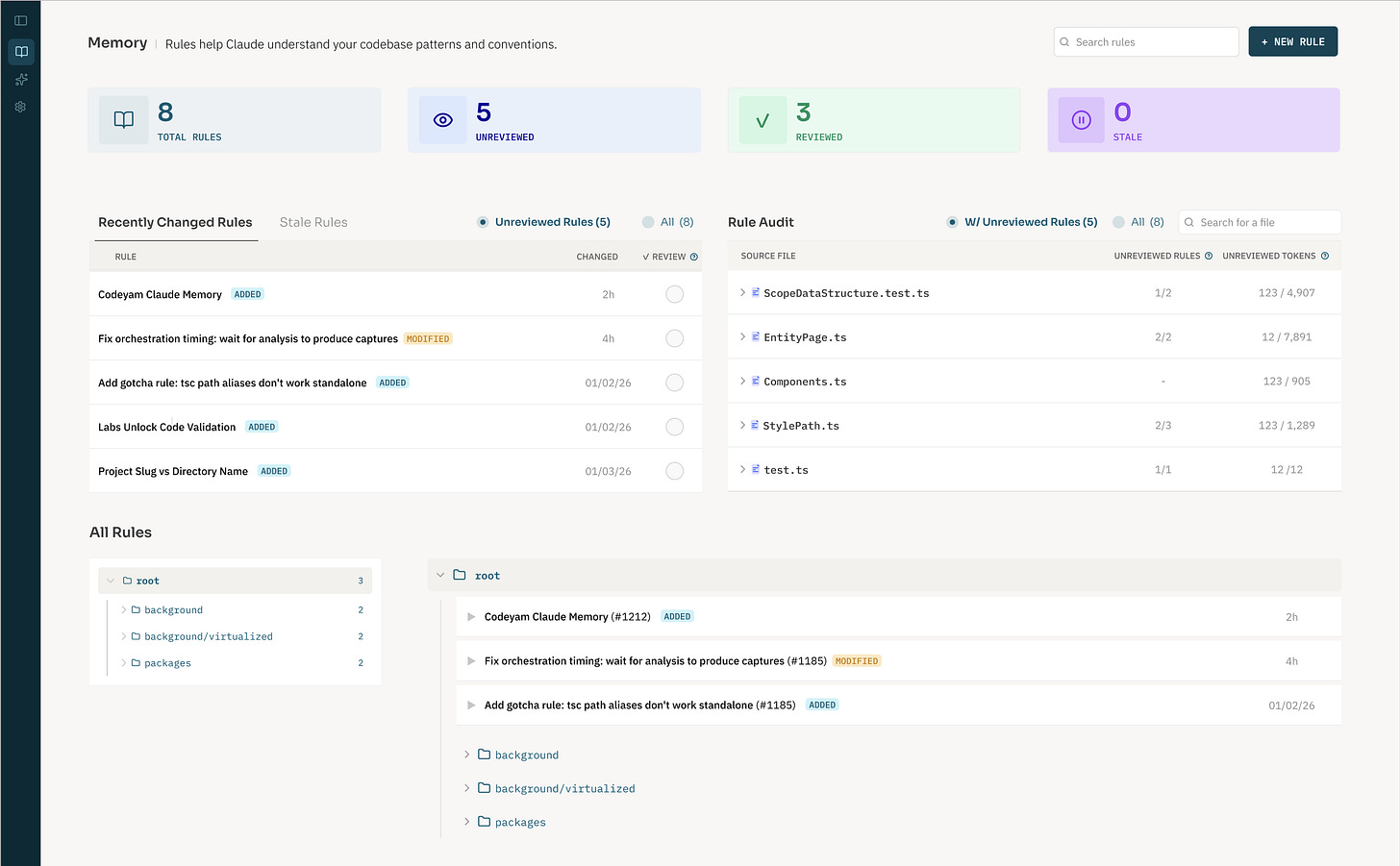

Our first publicly available feature is called CodeYam Memory and focuses on self-reflective memory creation and tracking (essentially the continuous documentation process we described above).

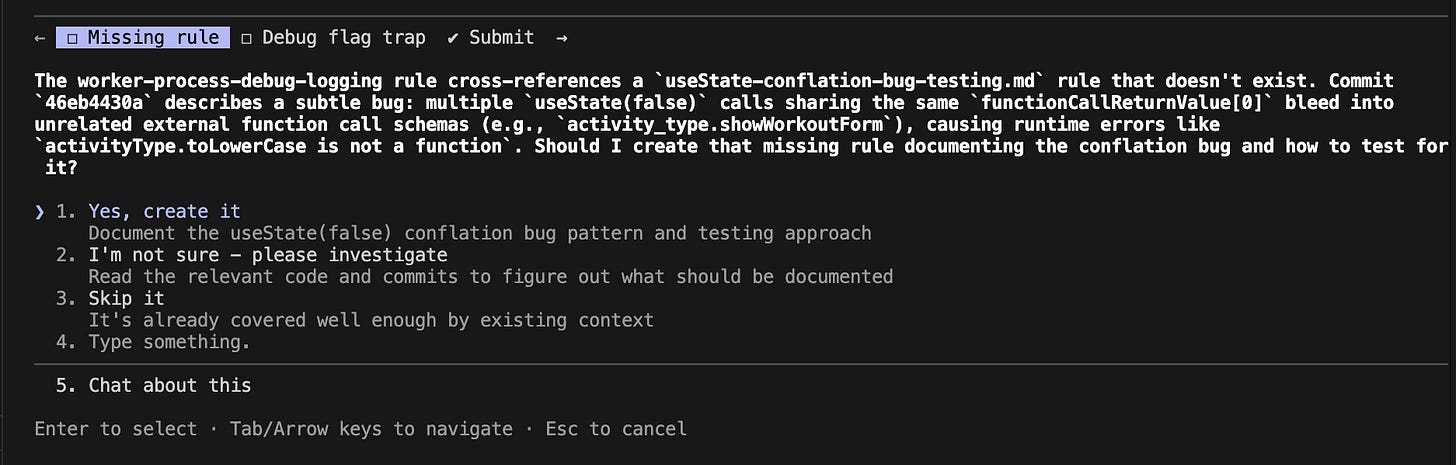

During a working session, the AI (Claude Code, to start) reviews what it did and identifies areas of confusion, important architectural decisions, or context that would be valuable in the future. These insights are saved as memories and associated with relevant parts of the codebase.

You can review these memories, see which ones have changed, and explore which ones apply to a given area of the system. High-quality memories can be marked as reviewed so you know which context you have already validated.
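One way to picture memories that are "associated with relevant parts of the codebase" is as notes keyed by file or directory paths, with a flag for whether a person has reviewed them. The Python sketch below is purely illustrative: it assumes a simple path-prefix matching rule, and `Memory`, `applies_to`, `memories_for`, and the sample `MEMORIES` are hypothetical, not CodeYam's data model or API.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass
class Memory:
    text: str
    paths: list[str]        # files or directories this memory is attached to
    reviewed: bool = False  # set once a person has validated the memory


def applies_to(memory: Memory, target: str) -> bool:
    """True if the target is one of the memory's paths or lives under one."""
    t = PurePosixPath(target)
    return any(t == PurePosixPath(p) or PurePosixPath(p) in t.parents
               for p in memory.paths)


def memories_for(memories: list[Memory], target: str,
                 unreviewed_only: bool = False) -> list[Memory]:
    """List memories relevant to a file, optionally hiding reviewed ones."""
    return [m for m in memories
            if applies_to(m, target) and not (unreviewed_only and m.reviewed)]


# Hypothetical sample memories.
MEMORIES = [
    Memory("Invoice totals are computed in cents to avoid float rounding.",
           ["src/billing"]),
    Memory("The auth middleware must run before rate limiting.",
           ["src/server/middleware.ts"], reviewed=True),
    Memory("Dates are stored in UTC and converted only at render time.",
           ["src"]),
]

if __name__ == "__main__":
    for m in memories_for(MEMORIES, "src/server/middleware.ts", unreviewed_only=True):
        print(m.text)
```

Attaching memories to directories as well as files lets broad context (like the UTC rule) surface anywhere beneath it, while narrower notes only appear where they matter.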

CodeYam Editor

CodeYam Editor is a new product in early access and the beginning of our push toward the ideal development experience. It introduces a new software development workflow where code and data are developed in conjunction, creating simulations that drive the development process.

If you’re interested in trying this out, sign up for our waitlist and mention “CodeYam Editor” specifically.

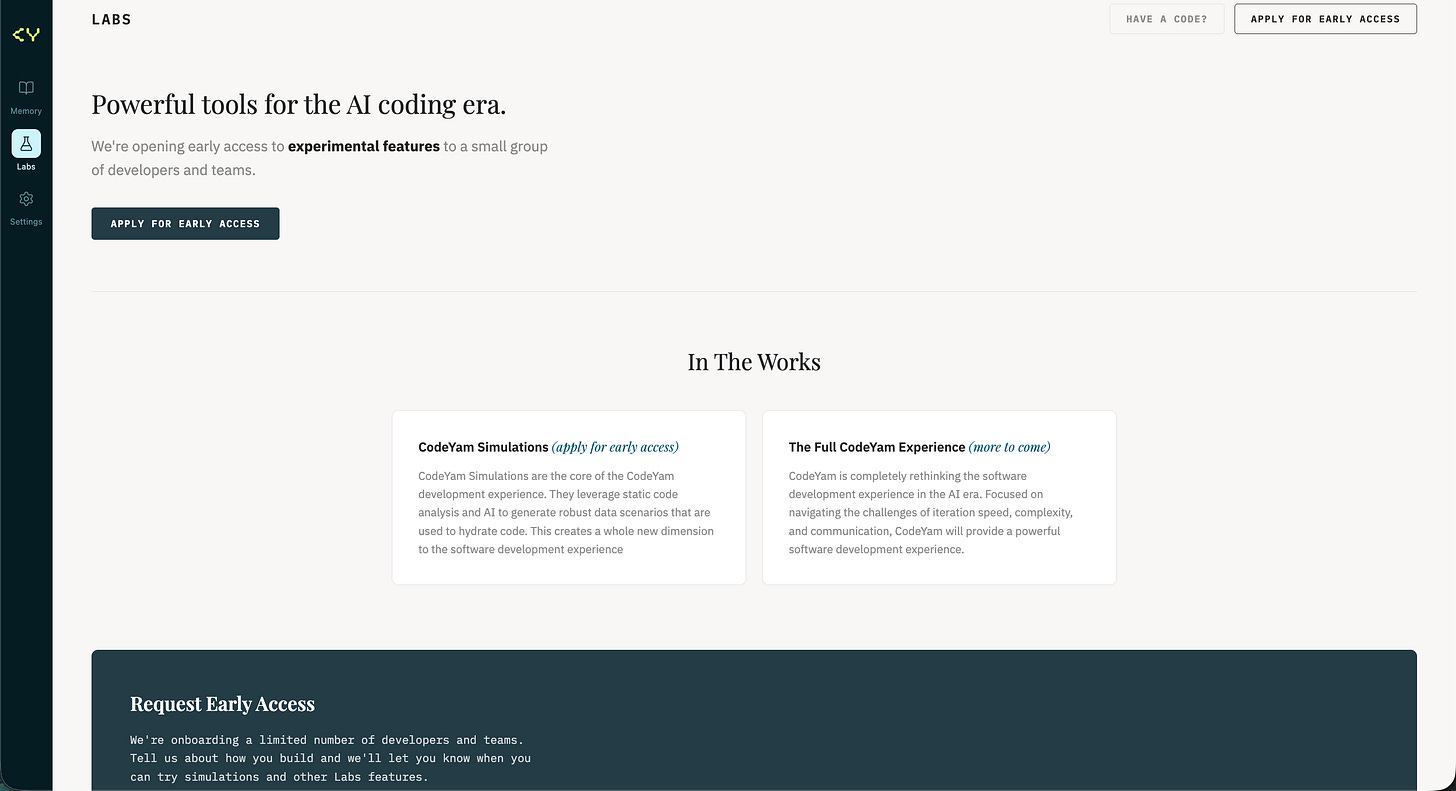

CodeYam Labs & Simulations

At the same time, other early-access functionality is available to select users in the “Labs” section of the CodeYam Dashboard.

These Labs features include the ability to edit, create, and deconstruct software and to isolate any method or function. Once isolated, a function can be simulated under different data scenarios, interacted with directly, and modified with AI assistance.

When a simulation behaves as expected, it can be captured and used in a variety of ways, including as part of a test suite or as a way to evaluate or demo the application. As simulations accumulate, you gain the ability to explore the system holistically, zooming in and out while collaborating with AI to make changes.

This is an early step, but it points toward a development experience where understanding, iteration, and confidence scale alongside complexity.

Why now?

AI has changed the economics of software creation. Generating code is no longer the primary bottleneck. What remains hard is communicating your vision, iterating on it as you learn more, and managing and maintaining its complexity as it grows. Even with increasingly powerful AI, we benefit from excellent tools that support these challenges.

A Star Trek-level future does not eliminate the need for these tools. It amplifies that need. The more powerful our AI collaborators become, the more important it is to have interfaces that keep humans oriented, intentional, and in control.

CodeYam introduces simulation to create a powerful, comprehensive AI-native software development experience for experienced developers and aspiring builders alike.